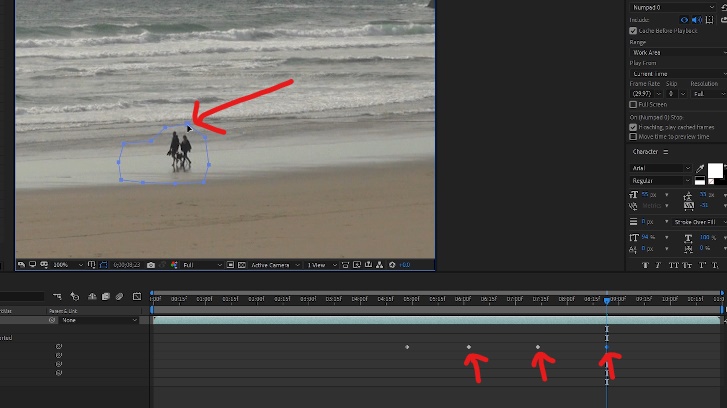

Adobe today announced that it is bringing content-aware fill to After Effects, the special effects software that is part of its Creative Cloud offering. The company has long offered this feature in Photoshop, where you can use it to automatically remove objects from a photo, with the application filling in the blank space with appropriate pixels, based on what’s around it. As you can imagine, doing this for a video is significantly harder because you have to do this for every image while the objects move. The company notes that this new feature is powered by Adobe Sensei, the company’s AI platform.

All you have to do to remove an object (or maybe a stray boom mic) from a scene is to mask it. If everything works as planned, the tools will automatically track the object through a scene (even when it moves behind another object for a bit) and replace it with more suitable pixels. If you need to fine-tune the result, you can also use Photoshop to create reference frames. Adobe notes that content-aware fill is also a very useful tool for 360-degree VR projects, where you can’t always hide everything around the camera.

With the annual NAB show coming up next week, Adobe is also launching a plethora of other video updates for both After Effects and Premiere Pro. Some of those focus on workflow improvement, including the new Freeform Project panel that lets you arrange assets visually and improved audio tools (which now also feature Auto Ducking for ambient sound in Audition and Premiere Pro). As usual, Adobe also worked hard on improving the overall performance of its applications. GPU rendering in Premiere can significantly cut down export times, for example, and mask tracking is now up to 38 times faster for 8K videos (and up to 4x faster for HD scenes).

“Video is experiencing a golden age as video professionals across broadcast, film, streaming services and digital marketing are facing higher consumer demand for content creation. Meanwhile, production timelines are shorter and the list of deliverables are longer,” said Steven Warner, vice president of Digital Video and Audio at Adobe. “Through optimized performance and intelligent new features powered by Adobe Sensei, video professionals can cut out more tedious production tasks to focus on their creative vision.”

And here’s a bonus for Twitch streamers: they can now use Character Animator to create real-time animation, similar to many of the live animations you may have seen on the Colbert Show (which uses this tool).

I've been using content-aware fill to great effect lately, especially in combination with a PS reference frame. However, anytime a person or object passes in front of the mask, it grabs details from those passing pixels and incorporates them into the fill layer. So the patch looks perfect right up until the person passes, at which point their clothing warps into the background patch. I'm masking out the object I want removed from the background (subtract mask) and then masking back in the objects passing in front of it (add mask) so that I have a perfect transparent mask around only the section I want to cover up. One trick I tried was to temporarily switch that second mask, the passing object, into a subtract mask as well. That way all of those pixels are gone too, and the software only has the background pixels to look at for context. This worked okay a couple of times, but it makes the transparency so large that the resulting generated fill layer isn't very good. I know I could make a second reference frame after the object passes, but (a) the fill needs to be identical to the fill beforehand, not a newly generated fill, and (b) I still think the passing object would bleed into the fill layer in the frames around the passing moment.

Well, I'm not really talking about roto here, I'm talking about the fill/patch. I did manually roto out the foreground stuff, and I rotoed out the background stuff using Mocha. Then I'm using CAF to fill the patch in, which is also working swimmingly - it is much, much better at matching motion blur and lighting changes than if I were to build a fill layer out myself. It's only causing problems for a second or so whenever something crosses the mask. So yes, the workaround is to use CAF all the way up until the person/object crosses, then to manually freeze the fill, copy that as a reference frame after the object has finished crossing, and then let CAF finish filling everything after that. But that is cumbersome and introduces potentially unnecessary human error. Maybe the feature isn't available yet, but ideally CAF would understand mask layers the way Mocha understands them for planar tracking. In Mocha you can create a second mask for objects passing in front of your first mask, and the software knows to ignore those pixels, allowing a seamless track even when the mask is interrupted.
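To make the mask setups from the forum posts concrete, here is a rough sketch of the per-frame mask arithmetic. It is only an illustration under stated assumptions: it does not call After Effects or Mocha, it says nothing about how Content-Aware Fill works internally, and the function name `hole_and_context` and the three mode names are hypothetical labels invented for this example.

```python
def hole_and_context(remove, occluder, mode):
    """Return (hole, context) boolean grids for one frame.

    remove   -- True where the unwanted background object is (subtract mask)
    occluder -- True where a passing foreground object is
    mode     -- hypothetical label for one of three setups:
      "add_back":        occluder is added back; everything outside the hole
                         is context, including the occluder's own pixels
      "subtract_both":   occluder is subtracted too; the hole grows
      "ignore_occluder": the wished-for behavior; small hole, and occluder
                         pixels are excluded from the context
    """
    hole, context = [], []
    for r_row, o_row in zip(remove, occluder):
        pairs = [(bool(r), bool(o)) for r, o in zip(r_row, o_row)]
        if mode == "subtract_both":
            h_row = [r or o for r, o in pairs]
        else:
            h_row = [r and not o for r, o in pairs]
        if mode == "ignore_occluder":
            c_row = [not r and not o for r, o in pairs]
        else:
            c_row = [not h for h in h_row]
        hole.append(h_row)
        context.append(c_row)
    return hole, context


if __name__ == "__main__":
    # One-row toy frame: the object to remove covers columns 1-2,
    # and a passer-by currently covers columns 2-3.
    remove = [[False, True, True, False]]
    occluder = [[False, False, True, True]]
    for mode in ("add_back", "subtract_both", "ignore_occluder"):
        hole, context = hole_and_context(remove, occluder, mode)
        print(mode, "hole:", hole[0], "context:", context[0])
```

In the toy frame, "add_back" leaves the passer-by's pixels in the context (the bleed described above), "subtract_both" removes them at the cost of a larger hole, and "ignore_occluder" keeps the hole small while excluding the passer-by, which is the behavior the reply hopes CAF could borrow from Mocha.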
